AI Has a Public Perception Problem

How the messaging around AI has gone wrong and how we might fix it

I’m Tanay Jaipuria, a partner at Wing, and this is a weekly newsletter about the business of the technology industry.

Hi friends,

AI has a public perception problem, and the data on it is becoming increasingly embarrassing.

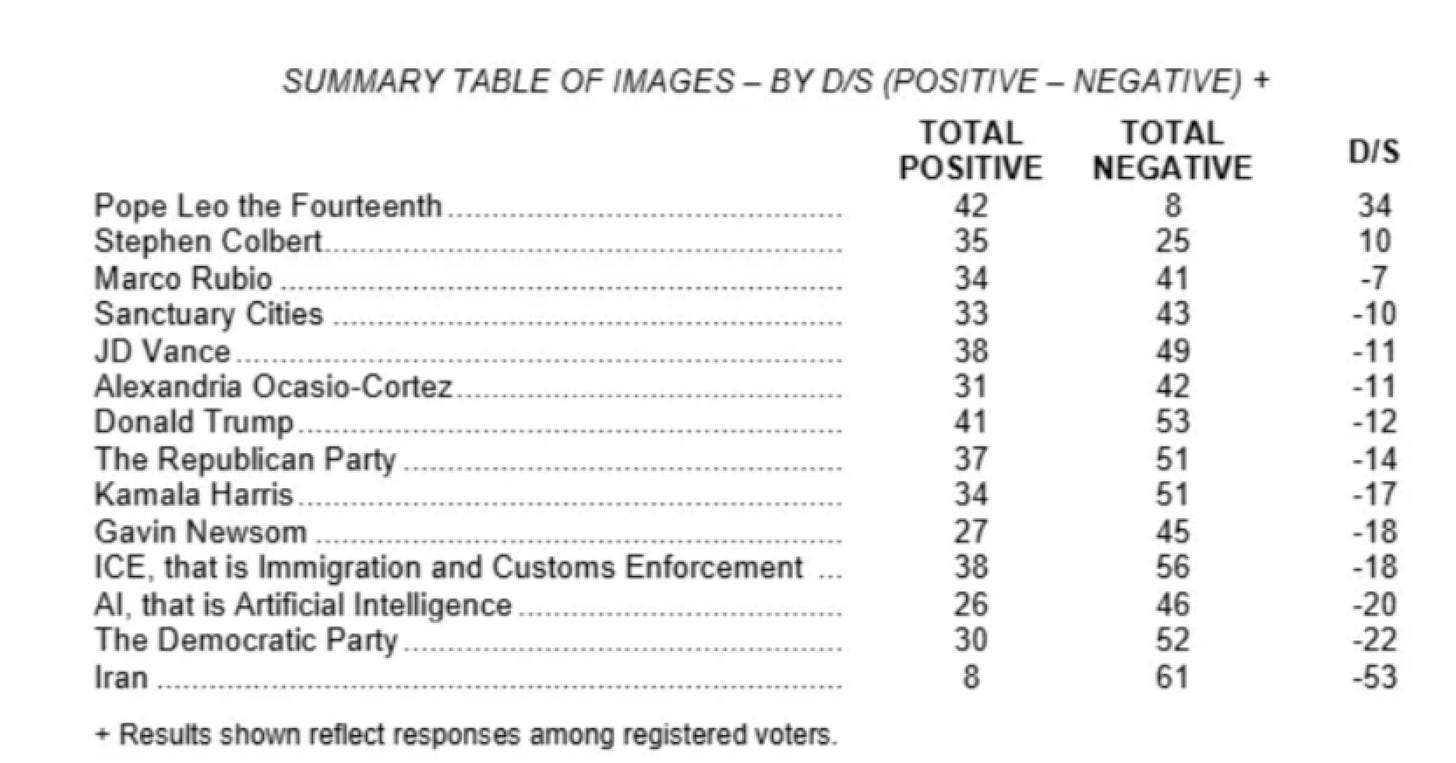

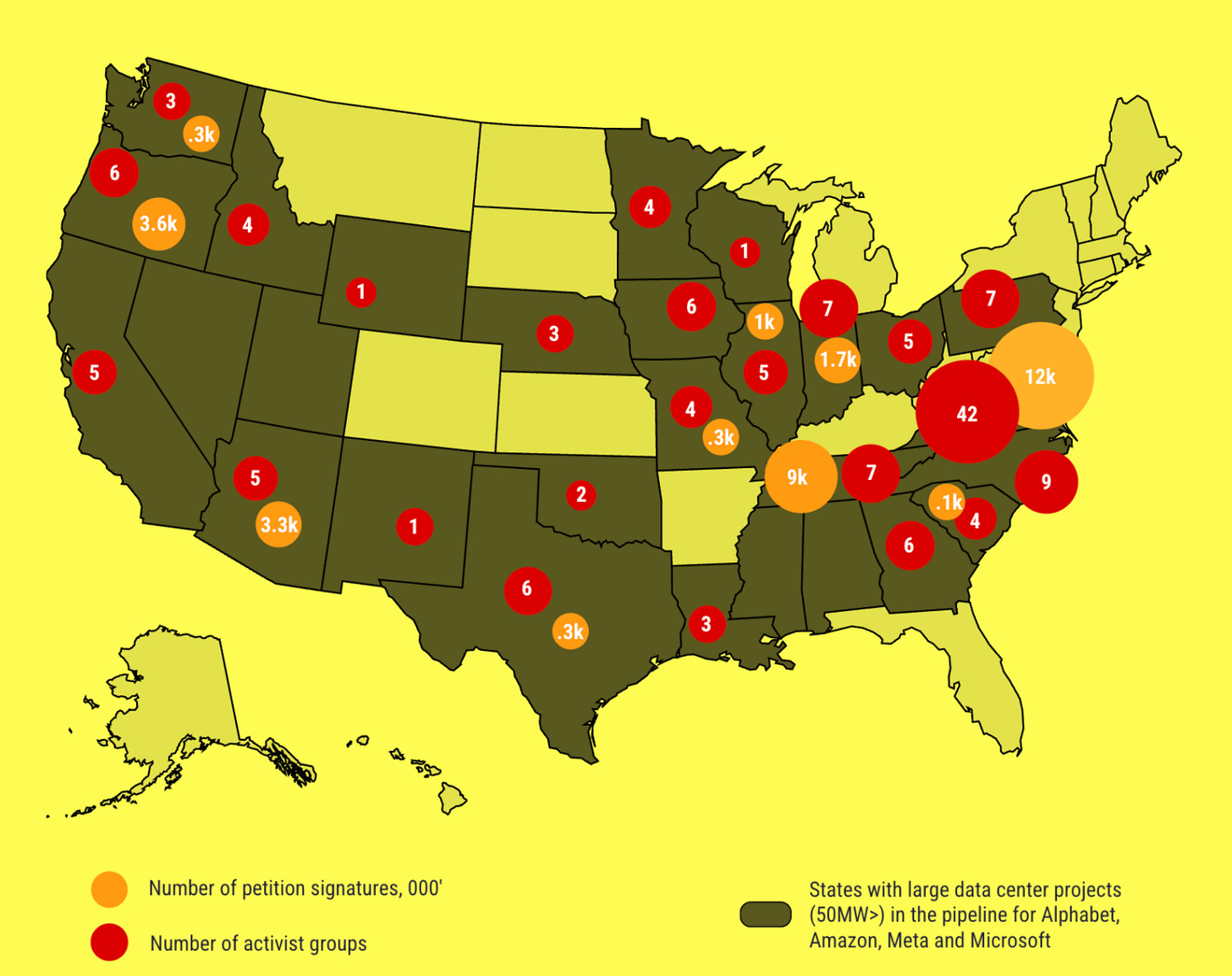

Half of Americans are more concerned than excited about AI in daily life, per Pew’s June 2025 survey, up from 37% in 2021. Only about 10% are more excited than concerned. An NBC poll last year found that just 26% of voters view AI positively, which makes the technology less popular than ICE. Over $50B of data center projects have been blocked or delayed by local opposition over the last 18 months. There are more than 100 activist groups across the country formed specifically to fight data center construction.

I know this topic is a bit different from what I usually cover, but it’s important enough to be worth going into.

In this piece, I’ll cover:

The shape of the backlash

The issues with the current messaging

The case the industry should actually be making

I. The shape of the backlash

The fear around AI broadly centers on three things.

The first is jobs. Dario’s “AI could eliminate half of all entry-level white-collar jobs in 1-5 years and push unemployment to 10-20%” line has unfortunately become perhaps the single most-cited AI soundbite of the past year. In a Mercer survey of 12,000 workers globally, the share worried about losing their job to AI jumped from 28% in 2024 to 40% in 2026. A Resume Now survey found 60% of workers expect AI to eliminate more jobs than it creates this year, and 51% are personally worried about their own job.

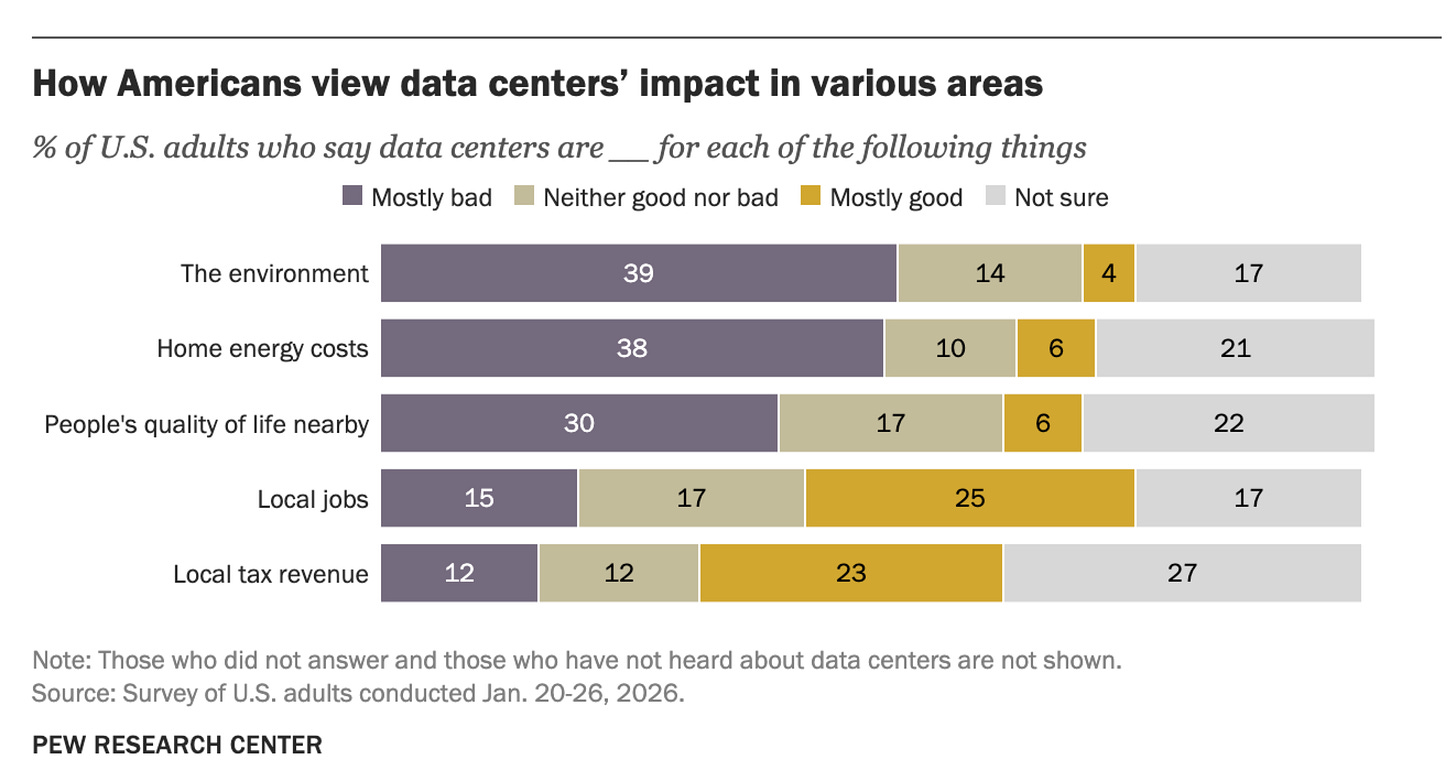

The second is data centers and the environmental story. People associate AI with rising electricity bills, water use, and noisy industrial sites near their homes as the graph below shows. A Heatmap poll found only 44% of Americans would welcome a data center in their community, which makes them less popular than gas plants and nuclear.

The third is a more diffuse fear about AI itself: safety, kids using chatbots, deepfakes, AI psychosis, autonomy and, more generally, “the unknown.” AI’s favorability rating is lower than ICE’s.

What’s striking is that this is the case despite ChatGPT being the fastest-adopted consumer product in history. People are using AI more but they just don’t trust it. And they don’t fully trust the people building it.

II. The messaging today

There’s a much more optimistic story to tell here, but the story making the rounds is quite a different one.

Dario’s pitch (and to be fair, parts of Sam’s) reads roughly like: AI will be the most powerful technology ever, half your jobs are gone, unemployment goes to 20%, and oh by the way, in the same breath, cancer gets cured and the economy grows at 10%. The optimistic part is real and discussed in Machines of Loving Grace (probably the best optimistic case any AI CEO has put in writing). But the TV soundbite that goes viral is “half the white-collar jobs are gone.”

Axios called this “fear-profiting” in March. Namely, framing AI as immensely powerful and dangerous reinforces that only a few well-resourced labs can build it safely. That’s an effective fundraising message and a terrible consumer message.

The industry has spent two years training the public to believe two things at once:

AI will replace your job, soon, and there’s nothing you can do

AI is a generational technology that everyone benefits from

These are not inherently contradictory (they can both be true in the long arc) but as a pair of talking points delivered by the same people in the same week, they read as a threat. If you’re a 45-year-old radiologist or paralegal or junior accountant watching the clips, you don’t hear “the economy grows at 10%.” You hear “you’re getting fired.”

The data centers piece adds to it. The local political story for the average voter is: my power bill went up, my water table is at risk, my kid’s commute got worse, and the people benefiting are tech billionaires who are also publicly bragging about putting my neighbors out of work.

The other aspect is that while AI is indeed a reason for some job displacement, many companies are using it as a blanket excuse for large layoffs that actually stem from periods of excess and overhiring.

Marc Andreessen noted this on a recent podcast with Harry Stebbings:

“The hiring binge that companies went on in COVID was just wild. Essentially every large company is overstaffed … Now they all have the silver bullet excuse, ‘Ah, it’s AI.’”

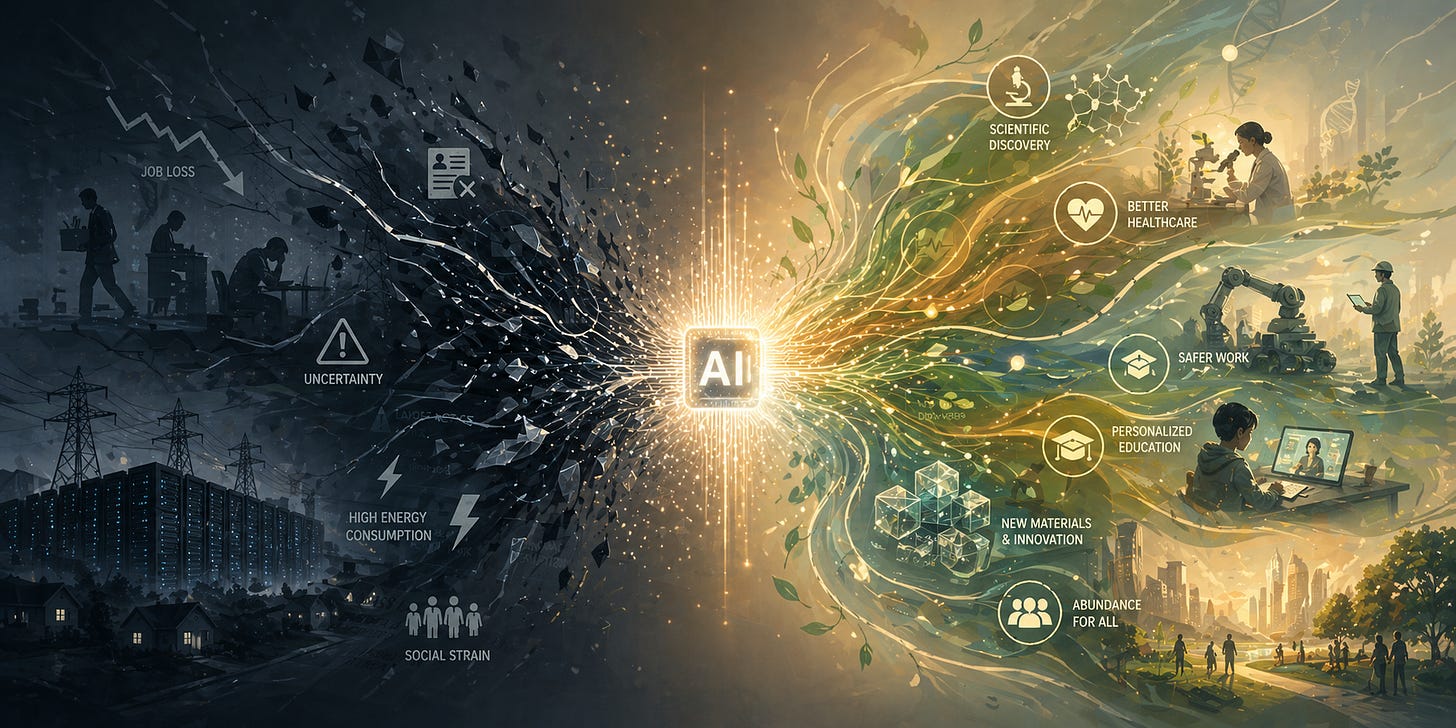

III. The more optimistic messaging

There’s a much better story sitting right there, and it is often taking a back seat, if not being ignored completely. It includes:

AI as the end of drudgery. Every job has a part of it that nobody actually wants to do. Filing claims, drafting boilerplate, parsing PDFs, answering the same email for the 200th time. The honest pitch is that AI eats the part of work people hate. This is also closer to what’s actually happening in most enterprise deployments today.

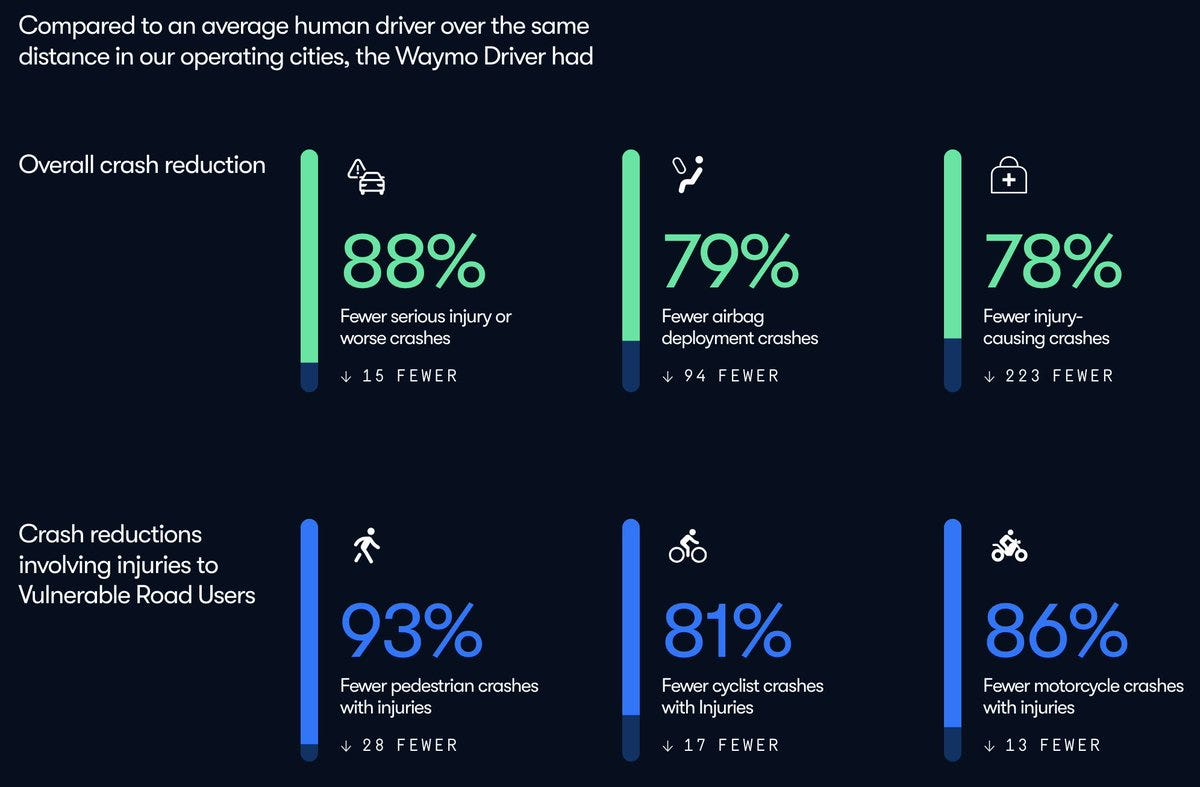

AI as the end of dangerous work / human error. Mining, long-haul trucking, hazardous materials handling, certain kinds of agricultural and industrial work where injuries and deaths are still routine. About 5,000 Americans die at work every year, and most of them are not white-collar workers. Robotics plus AI is one of the few things that actually moves that number. It’s still relatively early in robotics, so this isn’t quite a major talking point yet, but it can become one as robotics improves. In addition, 40,000 people in America die every year from automobile accidents, and Waymo’s initial data shows 90%+ decreases in accidents on a miles-traveled basis. A story of accidents prevented is a better one than a story of a junior lawyer let go.

AI as actual democratization of expertise. Products like ChatGPT, initially at $20/mo and now basically free, have actually democratized access to infinitely patient tutors, a second opinion on any medical issue, a therapist and a life coach. That is genuinely new in the sense that these versions of expertise have always been gated and expensive. These products and use cases largely work today, but they haven’t been major messaging points compared to AGI timelines.

AI for discovery. AI is going to enable us to discover novel drugs, novel materials and cure diseases we didn’t think were curable. Three of the last two years’ Nobel Prizes went to AI work. The “AI for discovery” story is perhaps the most optimistic and most abundant view of the benefit of AI and has been largely underplayed compared to labor displacement. Yes, we’re still relatively early in terms of progress on AI scientists and AI drug and material discovery, but it is a story worth amplifying.

Closing Thoughts

Some of you may rightfully retort that people like Dario do talk about various things I’ve mentioned. But that’s primarily in his long-form essays, and those are not his most repeated points relative to existential risk and labor displacement. Another valid counterargument is that they are just doing their part to warn people about what’s coming, which is fair. But at the same time, it’s important for these leaders to paint the optimistic case of the age of abundance, or we risk never getting to that outcome because of bans driven by the backlash.

The talking points in every interview should include the most optimistic messages of AI as well: discovery, democratization, and no more drudgery or dangerous work.

AI is the most powerful technology of our generation and could be the most popular. But today only 26% of voters view it positively. The gap between those two is at least partially a messaging problem, and it’s fixable.

If you have any comments or thoughts, feel free to tweet at me or email me on tanay at wing.vc